Fixing Mixed-Content and CORS issues at ML Model inference time with Azure Functions

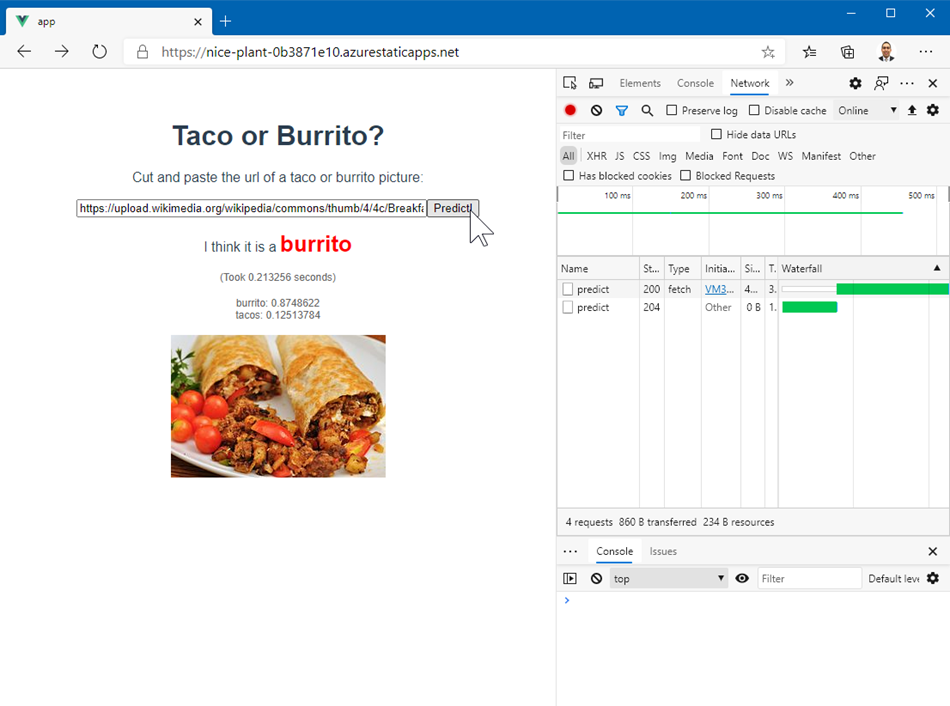

This is a follow-up to the previous post on deploying an ONNX model using Azure Functions. Suppose you've got the API working great and you want to include this amazing functionality on a brand new website. What follows is the harrowing path through failure we all must take to eventually reach the glorious end: an AI that understands tacos and burritos like I do.

Mixed Content Issues

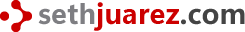

As soon as you deploy your site and bask in its newfound glory, you might run into something like this when you make the API call from JavaScript:

Calling an unsecured endpoint (the Azure Function using http instead of https) from a secured site is a no-no.
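To make the rule concrete, here is a minimal sketch of the check the browser is effectively doing. It is a simplification (real browsers make exceptions, for example for localhost), and the function name and URLs are made up for illustration:

```python
from urllib.parse import urlsplit


def is_mixed_content(page_url: str, api_url: str) -> bool:
    """Return True when a secure (https) page would call an insecure (http) endpoint.

    Simplified model of the browser rule; it ignores special cases such as localhost.
    """
    page_scheme = urlsplit(page_url).scheme.lower()
    api_scheme = urlsplit(api_url).scheme.lower()
    return page_scheme == "https" and api_scheme == "http"


# Hypothetical URLs, for illustration only.
site = "https://tacos.example.com/"
print(is_mixed_content(site, "http://tacos-api.example.net/api/predict"))   # blocked case
print(is_mixed_content(site, "https://tacos-api.example.net/api/predict"))  # allowed case
```

The only thing that differs between the two calls is the scheme of the API URL, which is exactly what the fix below changes.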

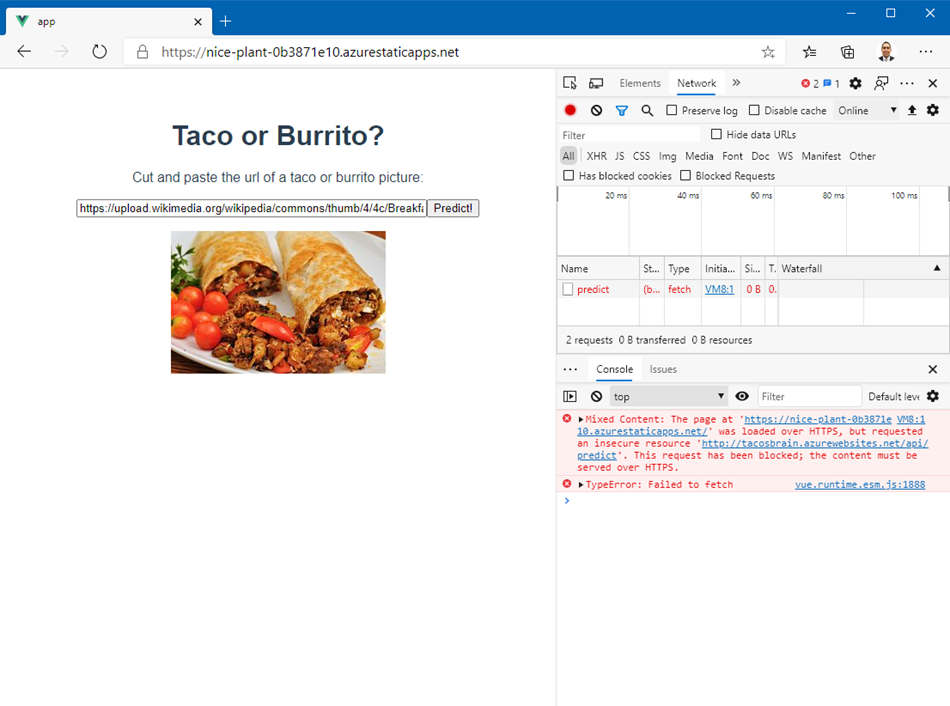

Luckily, Azure Functions can also be called via https! If you want to have that as the default setting, it's very easy to do:

Basically you go to the TLS/SSL settings and turn HTTPS Only to On to make sure all calls to the API are secured.

Fixing CORS Issues

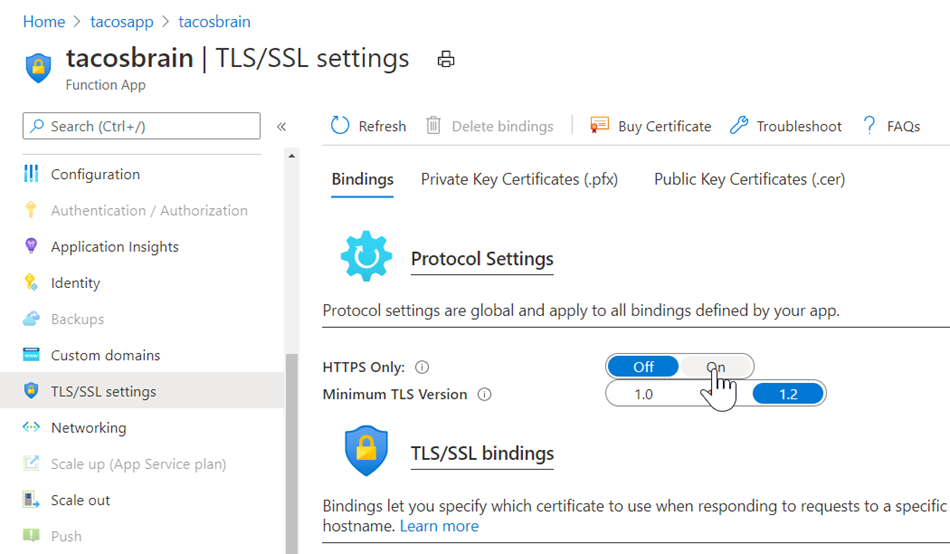

As soon as you fix this issue by adding the setting above and updating your code to point to an https API endpoint you will definitely run into this issue:

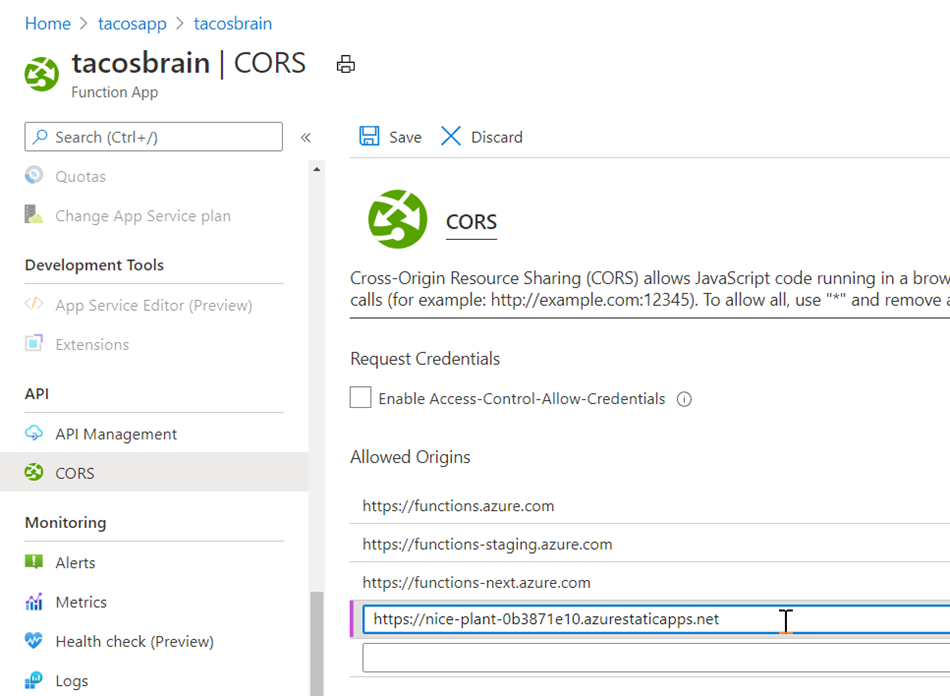

Our Tacos AI API is being protective of who can call it (as it should be). This is a security protection built into the web called CORS, or Cross-Origin Resource Sharing. In order to allow other sites (or origins) to use this resource (or share the API with others), we need to explicitly tell our API that this functionality is indeed permitted. Here's how you set this up in Azure Functions:

In the CORS settings we add the site that wishes to call the API and explicitly give it permission to share in the glory of our taco resource. As soon as we set this everything works!
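Conceptually, the allowed-origins list works something like the sketch below. This is not how Azure implements it internally; it is a small model of the decision, with a hypothetical function name and origins, to show why adding the site to the list makes the error go away:

```python
def cors_headers_for(origin, allowed_origins):
    """Return the CORS header(s) a server would attach for this Origin.

    An empty dict means "no permission granted", which the browser treats
    as a CORS failure. Origins are compared as exact strings
    (scheme + host + optional port).
    """
    if origin is None:
        return {}  # not a cross-origin browser request
    if "*" in allowed_origins:
        return {"Access-Control-Allow-Origin": "*"}
    if origin in allowed_origins:
        # Vary tells caches that the response depends on the Origin header.
        return {"Access-Control-Allow-Origin": origin, "Vary": "Origin"}
    return {}


# Hypothetical origins, for illustration only.
allowed = {"https://tacos.example.com"}
print(cors_headers_for("https://tacos.example.com", allowed))
print(cors_headers_for("https://burritos.example.org", allowed))
```

Note that the match is on the full origin, so `http://tacos.example.com` and `https://tacos.example.com` count as two different callers.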

What follows is what actually happens behind the scenes.

Bonus Material

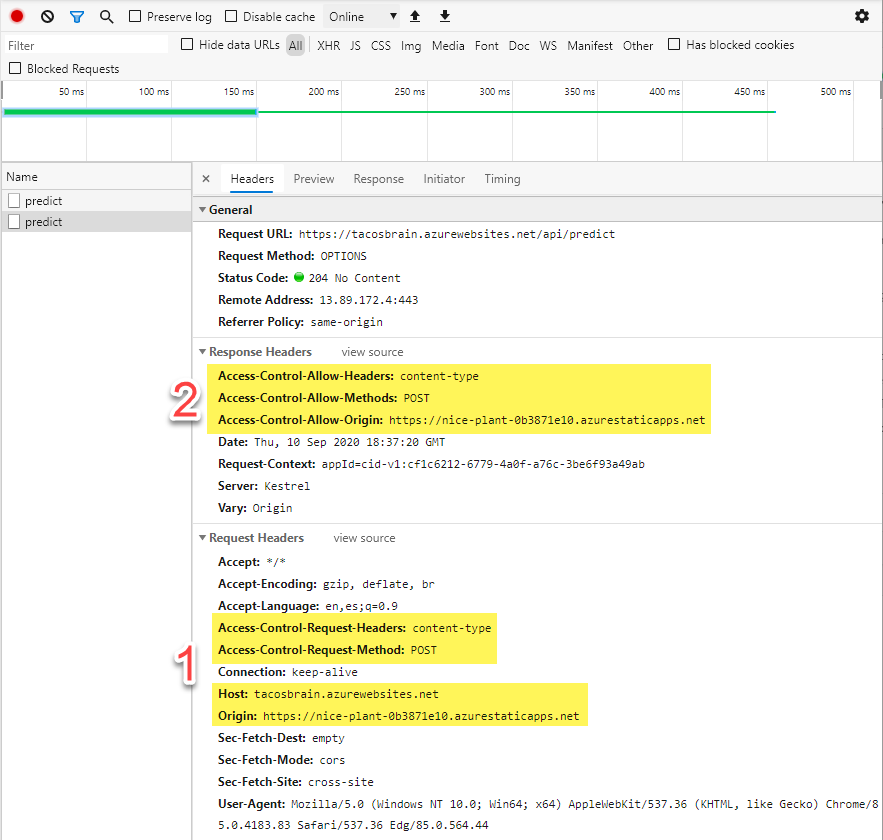

In the bonus section I thought I would explain how this all works. The first thing I hope you noticed was that there were two calls to the API. The second one was the regular POST call that we initiated. The first call, though, was an OPTIONS call (illustrated below).

I highlighted two important sections:

- These are the headers that were sent from our website to our API. Notice we are telling the API who we are (Origin is our website and Host is our API) and what we want to do (we want to POST and will ask for some kind of content).

- Our API responded that it will indeed allow the POST call from the Origin and return the content we want.

This is basically the dance that happens to make sure everything is on the up-and-up.
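If it helps to see the dance as code, here is a rough sketch of the server side of that OPTIONS exchange. It is a simplified model under my own assumptions (the function name, defaults, and origins are hypothetical), not the actual Azure Functions logic:

```python
def handle_preflight(request_headers, allowed_origins,
                     allowed_methods=("POST",), allowed_headers=("content-type",)):
    """Return the response headers for a CORS preflight (OPTIONS) request.

    An empty dict means the server grants nothing, so the browser will
    never send the real request.
    """
    origin = request_headers.get("Origin")
    method = request_headers.get("Access-Control-Request-Method")
    requested = request_headers.get("Access-Control-Request-Headers", "")
    wanted = [h.strip().lower() for h in requested.split(",") if h.strip()]

    if origin not in allowed_origins or method not in allowed_methods:
        return {}
    if any(h not in allowed_headers for h in wanted):
        return {}

    response = {
        "Access-Control-Allow-Origin": origin,
        "Access-Control-Allow-Methods": ", ".join(allowed_methods),
        "Vary": "Origin",
    }
    if wanted:
        response["Access-Control-Allow-Headers"] = ", ".join(wanted)
    return response


# What the browser asks before the real POST (hypothetical origin).
preflight = {
    "Origin": "https://tacos.example.com",
    "Access-Control-Request-Method": "POST",
    "Access-Control-Request-Headers": "content-type",
}
print(handle_preflight(preflight, {"https://tacos.example.com"}))
```

The browser compares the answer against what it asked for, and only if everything lines up does it go ahead with the real POST.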

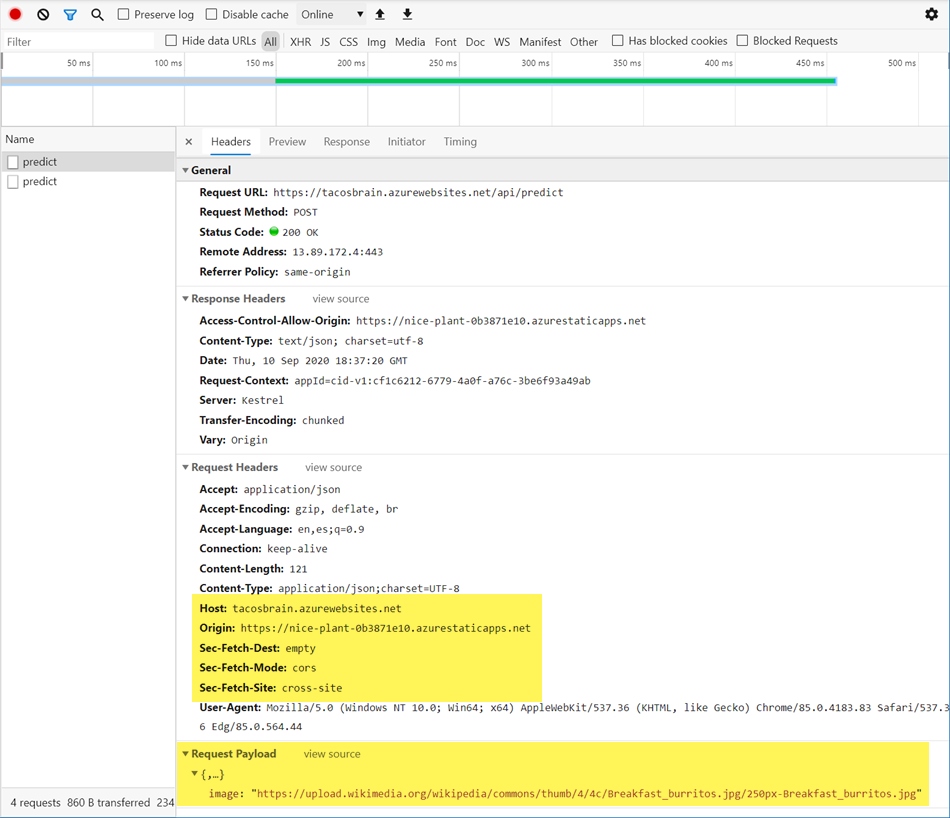

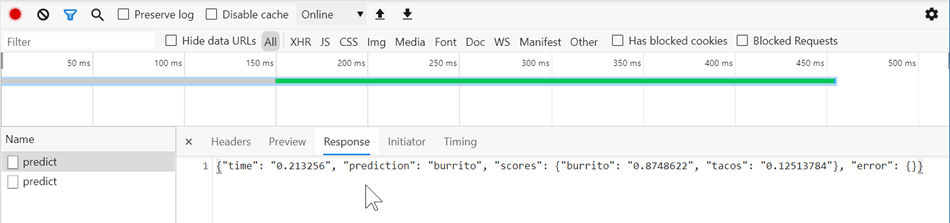

The second call is of course the POST call to send our API a picture of a taco or a burrito:

Again, our website sends both the Origin (our site) and the Host (the API) as well as the JSON payload. Here we see the response in all of its glory!
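For the curious, the same request can be put together outside the browser. The sketch below only builds the request (it does not send it) so you can see where Origin, Host, and the body come from. The endpoint URL and payload shape are placeholders I made up, not the real API contract, and a script like this is not subject to CORS at all; setting Origin by hand just imitates what the browser adds for you:

```python
import json
from urllib.request import Request


def build_predict_request(api_url, payload, origin):
    """Build (but do not send) a JSON POST request like the one the site makes."""
    body = json.dumps(payload).encode("utf-8")
    return Request(
        api_url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json", "Origin": origin},
    )


# Hypothetical endpoint and payload, for illustration only.
req = build_predict_request(
    "https://tacos-api.example.net/api/predict",
    {"url": "https://example.com/taco.jpg"},
    "https://tacos.example.com",
)
print(req.get_method(), req.full_url)
print("Host:", req.host)                    # derived from the API URL
print("Origin:", req.get_header("Origin"))  # the calling site
print("Body:", req.data.decode("utf-8"))
```

Passing `req` to `urllib.request.urlopen` would actually send it, which I left out since the endpoint here is a placeholder.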

Done! It all seems to work!

Summary

When I first encountered these issues I was super confused. In hindsight it makes sense to restrict sites that can call an API via JavaScript (and with what protocol) - after all, I wouldn't want folks to abuse my Tacos AI...

Finally, I know I spent zero time explaining how I built the actual static site: I will leave that for another time!


Your Thoughts?

- Does it make sense?

- Did it help you solve a problem?

- Were you looking for something else?